I remember sitting very close to the TV as a child and seeing that the image was made up of tiny colored dots, each of which broke down into miniature vertical strips of red, green, and blue when I looked even closer.

Back then, a television was a bulky box that sat on its own stand. Today, screens are so thin that they hang flat on the wall.

At the same time, picture quality seems to improve every few years. Manufacturers promise sharper resolution, brighter images, and richer colors, with labels such as HD, 4K, 8K, OLED, and QLED.

But can television images really keep improving forever? Or are we approaching the limits of what our eyes can actually see?

From bulky boxes to ultra-thin screens

Early televisions used cathode ray tube (CRT) technology. Inside the screen, beams of electrons swept rapidly across a phosphor coating, lighting up tiny points that formed the image.

The process happened so quickly that our eyes perceived a continuous picture. These televisions were bulky and deep, but for decades, they were the standard way of watching TV.

In the early 2000s, flat-panel displays began replacing bulky CRT televisions. Liquid crystal displays (LCDs) allowed screens to become much thinner and lighter, and they made higher-resolution displays easier to produce. However, early LCD TVs often struggled with contrast, particularly when trying to display deep blacks.

A major step forward came with the development of efficient light-emitting diodes (LEDs) that produced blue light, a breakthrough recognised with the 2014 Nobel Prize in Physics.

By combining red, green, and blue LEDs, it became possible to create bright white light sources, which are now widely used to backlight LCDs. This allows more control over the amount of light passing through each pixel.

More recently, technologies such as OLEDs (organic light-emitting diodes) have improved picture quality further.

Unlike LCD screens, where a backlight shines through liquid crystals to create the image, OLED displays allow each pixel to produce its own light. Because individual pixels can be switched off completely, OLED screens can achieve deeper blacks, higher contrast, and more vivid images.

The race for more pixels

Much of the marketing around televisions focuses on resolution: the number of pixels that make up the image. Standard definition television contained only a few hundred lines of pixels.

High definition (HD) increased this dramatically. Then came 4K, which contains roughly four times as many pixels as HD.
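As a rough check on that figure, the pixel counts can be worked out directly. The sketch below assumes the common consumer resolutions (1920 × 1080 for HD, 3840 × 2160 for 4K, and 7680 × 4320 for 8K). These numbers and the names in the code are assumptions added for illustration.

```python
# Assumed consumer resolutions (width, height) in pixels; illustrative values.
RESOLUTIONS = {
    "HD": (1920, 1080),
    "4K": (3840, 2160),
    "8K": (7680, 4320),
}


def pixel_count(name):
    """Total number of pixels for a named resolution."""
    width, height = RESOLUTIONS[name]
    return width * height


def ratio_to_hd(name):
    """How many times more pixels a format has than HD."""
    return pixel_count(name) / pixel_count("HD")


if __name__ == "__main__":
    for name in RESOLUTIONS:
        print(f"{name}: {pixel_count(name):,} pixels, "
              f"{ratio_to_hd(name):.0f}x HD")
```

Under these assumptions, 4K works out to four times the pixels of HD, and 8K to sixteen times.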

Now manufacturers are promoting 8K displays with even more detail. But resolution alone does not determine how good a picture looks.

At typical viewing distances in a living room, human eyesight limits our ability to distinguish individual pixels. For many of us, the difference between a 4K and 8K television may be difficult (or even impossible) to notice unless the screen is extremely large or viewed very closely.

Instead, other factors such as contrast, brightness, color accuracy, and motion handling often have a bigger impact on how realistic an image appears.

Tiny particles, better colors

Some of the biggest improvements in modern displays come from advances in materials science.

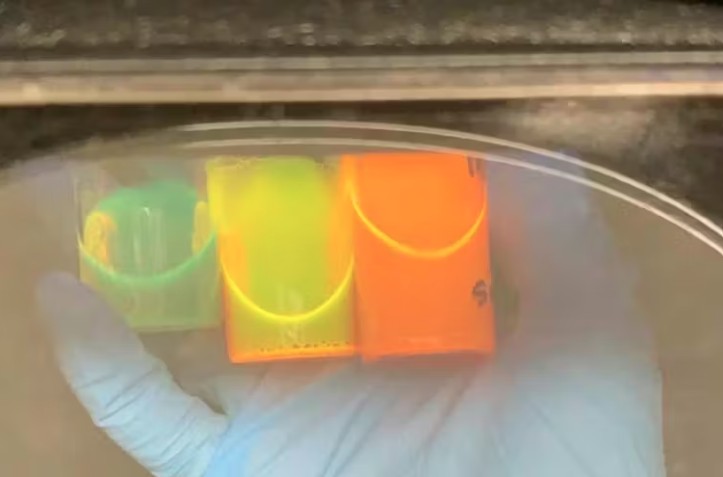

One example is quantum dots: tiny semiconductor particles only a few nanometres across. When light hits them, they emit very specific colors that depend on their size.

Smaller dots produce bluer light, while larger dots produce redder light. This size-dependent behaviour allows the colors to be tuned very precisely, improving the brightness and color range of modern televisions.

Interestingly, the same materials are also used in scientific research. In my own work, we use quantum dots not to improve televisions, but to help detect biological targets.

Because these nanoparticles emit very bright and precise colors, they can act as tiny fluorescent labels that highlight disease markers or pathogens. The same nanoscale properties that make TV colors more vibrant can also help scientists see biological processes more clearly.

Are there limits to how good screens can get?

Even with these advances, displays cannot improve indefinitely. Human vision sets its own limits: our eyes can perceive only a certain range of colors and brightness levels, and can resolve only a certain level of detail at a given distance.

One study found the average human eye can distinguish 94 pixels per degree of the visual field. In practice, that means you need to be less than two metres away from a 65-inch TV to detect any difference between a 4K screen and an 8K one.
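The two-metre figure can be roughly reproduced with some simple geometry. The sketch below is an illustration rather than the study's own method: it assumes a flat 16:9 screen viewed head-on, a 4K panel that is 3,840 pixels wide, and it measures pixel density at the centre of the screen. The function names are hypothetical.

```python
import math


def screen_width_m(diagonal_in, aspect=(16, 9)):
    """Physical width in metres of a screen with the given diagonal (inches)."""
    w, h = aspect
    return diagonal_in * 0.0254 * w / math.hypot(w, h)


def pixels_per_degree(diagonal_in, horizontal_px, distance_m):
    """Pixels covered by one degree of visual angle at the screen centre."""
    pixel_pitch_m = screen_width_m(diagonal_in) / horizontal_px
    # Length on the screen spanned by one degree at this viewing distance.
    one_degree_m = 2 * distance_m * math.tan(math.radians(0.5))
    return one_degree_m / pixel_pitch_m


def distance_for_ppd(diagonal_in, horizontal_px, target_ppd):
    """Viewing distance (metres) at which the screen delivers target_ppd."""
    pixel_pitch_m = screen_width_m(diagonal_in) / horizontal_px
    return target_ppd * pixel_pitch_m / (2 * math.tan(math.radians(0.5)))


if __name__ == "__main__":
    # Distance at which a 65-inch 4K screen reaches 94 pixels per degree.
    d = distance_for_ppd(65, 3840, 94)
    print(f"65-inch 4K reaches 94 pixels per degree at about {d:.1f} m")
```

Under these assumptions, a 65-inch 4K screen delivers 94 pixels per degree at a viewing distance of about two metres. From farther away, the 4K image already matches or exceeds what the eye can resolve, so the extra pixels of an 8K screen could only be noticed from closer than that.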

Physics also plays a role. Screens cannot become infinitely bright without becoming uncomfortable, perhaps even unsafe, to watch.

And reproducing every color the human eye can perceive is an enormous technical challenge. This means that while television technology will continue to improve, the most noticeable gains may no longer come from simply adding more pixels.

Instead, future advances may focus on better contrast, wider color ranges, improved motion, and more immersive viewing experiences.

The future of television

Television displays have come a long way from the bulky CRT sets some of us remember. Advances in materials, nanotechnology, and electronics have transformed how images are produced. But as screens approach the limits of what human vision can perceive, the race for ever-higher resolution may begin to slow.

The next big improvements in television may not come from adding more pixels, but from making the ones we already have look even more lifelike. After all, most of us no longer sit close enough to the screen to see those tiny red, green, and blue lines.

Renee Goreham, Associate Professor, Physics, University of Newcastle

This article is republished from The Conversation under a Creative Commons license.
